I took a quick look at Cockpit (as packaged for Debian 11, version 239-1), but decided to go back to Webmin.

Here are my thoughts, comparing the two:

Cockpit has better packaging in Debian 11: it was just an “apt install” away. (Webmin, in contrast, was a .deb downloaded from dodgy SourceForge servers, although there is an md5sum to check the download against.)
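For anyone who wants to check a downloaded .deb against a published md5sum, here is a rough sketch in Python. The function names are my own, and the file name and checksum in the usage note are placeholders, not real Webmin values. Keep in mind that an MD5 match only tells you the download wasn't corrupted; it isn't strong proof against tampering.

```python
import hashlib


def md5_of_file(path, chunk_size=1 << 20):
    """Return the hex MD5 digest of the file at `path`, reading it in chunks."""
    digest = hashlib.md5()
    with open(path, "rb") as fh:
        # Read fixed-size chunks so large packages don't need to fit in memory.
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_published(path, published_hex):
    """Compare the file's MD5 with a published checksum (hypothetical helper)."""
    # Published checksums are often pasted with stray whitespace or uppercase.
    return md5_of_file(path) == published_hex.strip().lower()
```

Usage would be something like `matches_published("webmin.deb", "<checksum from the download page>")`, where both arguments are stand-ins for whatever you actually downloaded.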

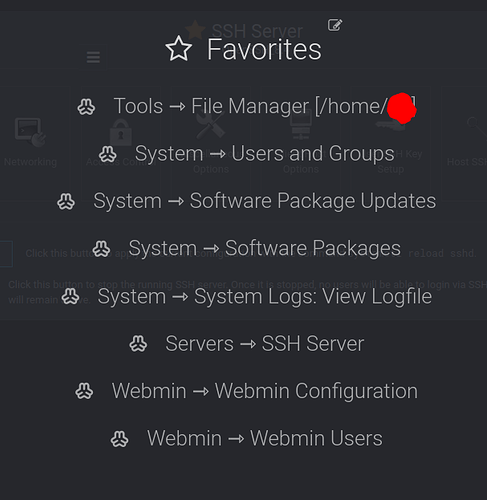

Cockpit’s UX was something like 10x cleaner and more consistent than Webmin’s. It felt like a much more modern web app (Webmin will hurtle you into the past by 10 or 20 years… forget using Webmin from a smartphone). Red Hat did a good job designing Cockpit, but it has far fewer features than Webmin (for example, I couldn’t find any place to monitor /var/log/syslog), to the point of not covering all the basics. I’d rather have Webmin’s too many features (or at least, features that are poorly organized from a UX perspective) than not enough features.

While it was possible to install a pre-1.0-release file manager into Cockpit, it had nowhere near the maturity and feature-completeness of the file manager bundled with Webmin.

Webmin’s bundled file manager alone made it worth using! It had tabs, bookmarks (to favorite folders), RESTful URLs, etc.

Although Cockpit’s killer feature is the ability to administer podman containers, I highly doubt that it can handle all the admin and maintenance tasks that a real-world admin would end up needing over the longer term. A real-world admin will likely have to drop down to the command line anyway for everything Cockpit doesn’t accommodate, so there’s little point in using Cockpit at all if it doesn’t cover everything you need. Of the fellow geeks I know, I’m unaware of even one who manages podman containers long term (say, for 3 years running, including security updates, backups/restores, etc.) entirely from Cockpit, or from Cockpit at all.

Is there anyone out there who loves and uses podman from Cockpit, and never needs to drop down to the CLI?